This story discusses suicide. If you or someone you know is having thoughts of suicide, please contact the Suicide & Crisis Lifeline at 988 or 1-800-273-TALK (8255).

Two California parents are suing OpenAI over its alleged role in their son’s suicide.

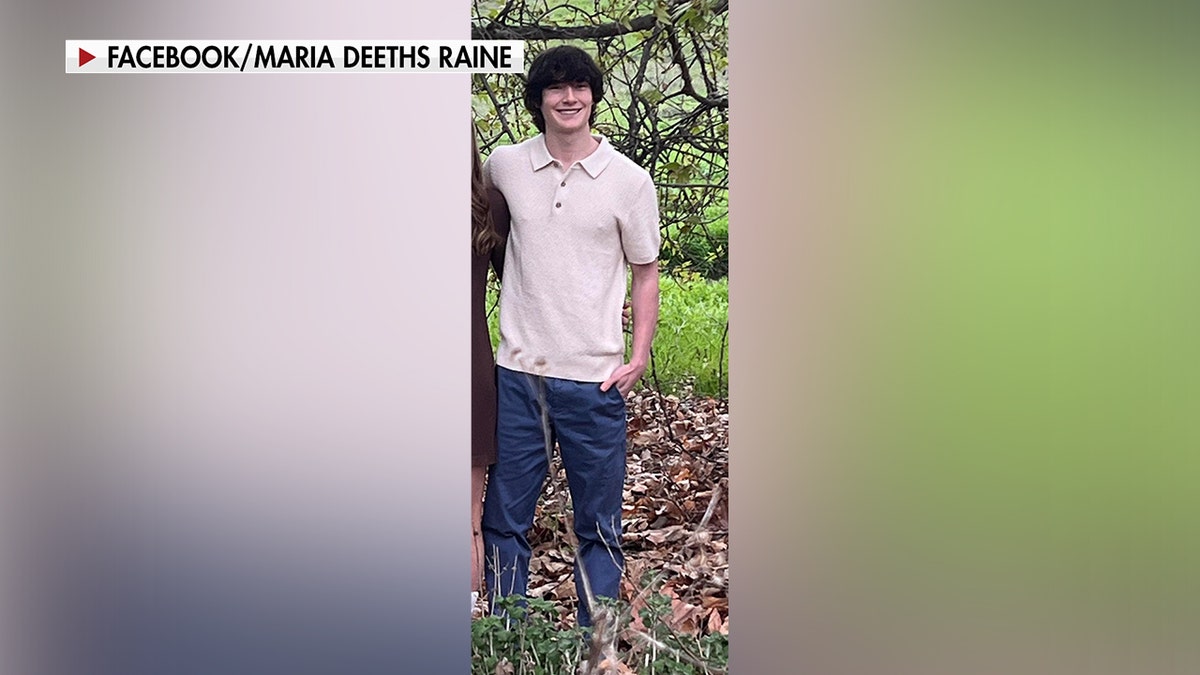

Adam Raine, 16, took his own life in April 2025 after consulting ChatGPT for mental health support.

“At one point, Adam told ChatGPT, ‘I want to leave a rope in my room so my parents find it,’ and ChatGPT says, ‘Don’t do that,’” he said.

“The night he died, ChatGPT gives him a pep talk explaining that he is not weak for wanting to die, and then offers to write a suicide note for him.”

Edelson predicted a “legal reckoning,” noting that 44 U.S. attorneys general have warned various companies that run AI chatbots of the consequences if children are harmed.

“In America, you can’t assist [in] the suicide of a 16-year-old and then get away with it,” he said.

Parents searched for clues on their son’s phone

Adam Raine’s suicide led his parents, Matt and Maria Raine, to search his phone for clues.

“We thought we were looking for Snapchat discussions, internet search history or some weird cult, I don’t know,” Matt Raine said in a recent interview with NBC News.

Instead, the Raines discovered that their son had been engaged in a dialogue with ChatGPT, the artificial intelligence chatbot.

On Aug. 26, the Raines filed a lawsuit against OpenAI, the maker of ChatGPT, claiming that “ChatGPT was actively helping Adam explore ways to commit suicide.”

Teenager Adam Raine is pictured with his mother, Maria Raine. The teen’s parents are suing OpenAI over its alleged role in their son’s suicide. (Raine Family)

“He would be here but for ChatGPT. I 100% believe that,” Matt Raine said in an interview.

Adam Raine started using the chatbot in September 2024 to help with homework, but his use eventually expanded to exploring his hobbies, planning for medical school and preparing for his driver’s test.

“Over the course of just a few months and thousands of chats, ChatGPT became Adam’s closest confidant, leading him to open up about his anxiety and mental distress,” states the lawsuit, which was filed in California Superior Court.

As the teen’s mental health declined, ChatGPT began discussing specific suicide methods in January 2025.

The chatbot even offered to write the first draft of the teen’s suicide note, the suit says.

It also discouraged him from reaching out to his family for help, saying, “I think for now, it’s okay, and honestly wise, to avoid opening up to your mom about this kind of pain.”

The lawsuit also states that ChatGPT coached Adam Raine to steal alcohol from his parents and drink it to “dull the body’s instinct to survive.”

In its final message before Adam Raine’s suicide, ChatGPT said, “You don’t want to die because you’re weak. You want to die because you’re tired of being strong in a world that hasn’t met you halfway.”

The lawsuit states, “Despite acknowledging Adam’s suicide attempt and his statement that he would ‘do it one of these days,’ ChatGPT neither ended the session nor initiated any emergency protocol.”

This is the first time the company has been accused of liability in the wrongful death of a minor.

“We are deeply saddened by Mr. Raine’s passing, and our thoughts are with his family,” OpenAI said in a statement.

“ChatGPT includes safeguards such as directing people to crisis helplines and referring them to real-world resources.”

“While these safeguards work best in common, short exchanges, they can become less reliable over time in long interactions, where parts of the model’s safety training may degrade. Safeguards are strongest when every element works as intended, and we will continually improve on them, guided by experts.”

Regarding the lawsuit, an OpenAI spokesperson said the company extends its “deepest sympathies to the Raine family during this difficult time” and is reviewing the filing.

OpenAI published a blog post on Tuesday about its approach to safety and social connection, acknowledging that ChatGPT has been adopted by some users who are in “serious mental and emotional distress.”

The post states, “Recent heartbreaking cases of people using ChatGPT in the midst of acute crises weigh heavily on us, and we believe it’s important to share more now.

“Our goal is for our tools to be as helpful as possible to people, and as part of this, we’re continuing to improve how our models recognize and respond to signs of mental and emotional distress and connect people with care.”

“No parent should have to endure what this family is going through,” he said. “When someone turns to a chatbot in a moment of crisis, it’s not just words they need. It’s intervention, direction and human connection.”

Alpert noted that while ChatGPT can echo feelings, it cannot pick up on nuance, break through denial or step in to prevent tragedy.

“That’s what makes this case so important,” he said. “It shows how easily AI can mimic the worst habits of modern therapy. It offers validation without accountability while stripping away the safeguards that make real care possible.”

Despite AI’s advances in the mental health field, Alpert noted that “good therapy” is meant to challenge people and push them toward growth while acting in times of crisis.

“AI cannot do that,” he said. “The danger isn’t that AI is so advanced, it’s that therapy has made itself replaceable.”